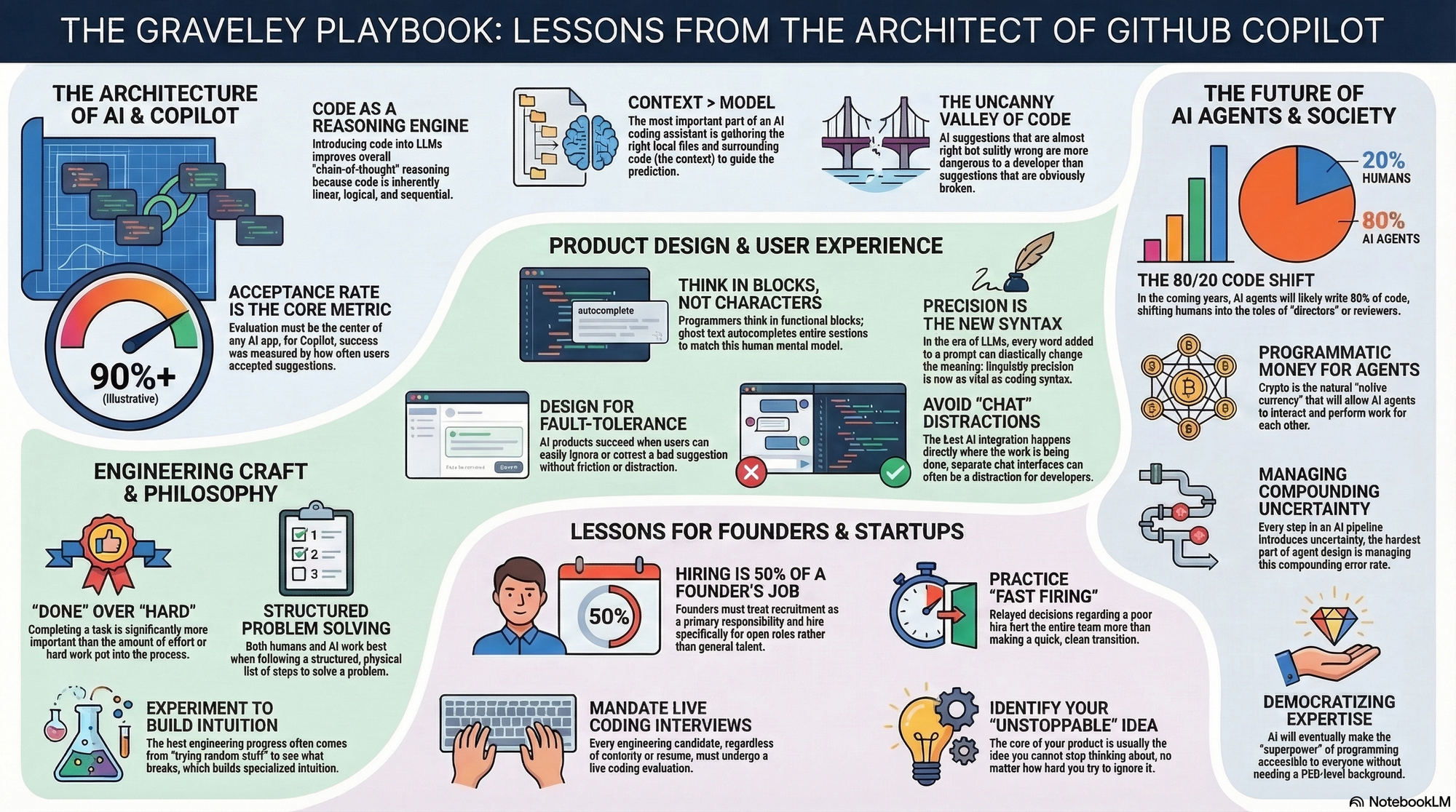

Alex Graveley is the visionary software engineer and architect behind some of the most transformative developer tools of the last decade, most notably GitHub Copilot, where he served as Chief Architect. From his early roots in the open-source GNOME project to founding Hackpad (acquired by Dropbox) and Minion AI, Graveley’s career has focused on reducing the friction between human intent and machine execution.

Part 1: The Architecture of AI & Copilot

- On Code as Reasoning: "Introducing code into Large Language Models improved their overall 'chain-of-thought' reasoning because code is inherently linear, logical, and sequential." — Source: No Priors Podcast

- On Baseline Evaluation: "Evaluation must be the core of any AI application; for Copilot, our primary metric was the acceptance rate of suggestions by the user." — Source: Building with AI

- On Training Artifacts: "The first version of Copilot was essentially a 'training artifact' from a small sample of GitHub data, and it was quite bad before we iterated on prompting." — Source: No Priors Podcast

- On Latency as a Feature: "Speed is a critical feature for AI; network latency significantly impacts user adoption because completions must feel instantaneous to stay in the flow." — Source: Vertex AI Search - Alex Graveley

- On Model Malleability: "Engineers should have a crisp understanding of the difference between pre-training and post-training to know when a model can be generalized." — Source: Building with AI

- On Determinism: "Unlike traditional computers which are deterministic, LLMs offer no guarantees; you have to develop a specialized intuition for how they will behave." — Source: No Priors Podcast

- On Context Windows: "The most important part of an AI coding assistant isn't just the model, but the context—gathering the right local files and surrounding code to guide the prediction." — Source: Alex Graveley Blog

- On the Uncanny Valley of Code: "AI suggestions that are almost right but subtly wrong are more dangerous than suggestions that are obviously broken." — Source: No Priors Podcast

- On Collaborative Filtering: "The power of Copilot comes from the fact that it has seen how millions of other developers solved similar problems in the past." — Source: YouTube - GitHub Copilot Architect

- On Tiny Teams: "Great leaps in technology often come from a tiny team working in the face of widespread skepticism that the idea can even work." — Source: No Priors Podcast
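
The acceptance-rate metric from the "Baseline Evaluation" quote above can be illustrated with a short sketch. This is only an illustration under stated assumptions: the `SuggestionEvent` record and its fields are hypothetical names invented here, not Copilot's actual telemetry schema, and the real metric may have been defined differently.

```python
from dataclasses import dataclass


@dataclass
class SuggestionEvent:
    # Hypothetical record: one suggestion that was shown to a user.
    suggestion_id: str
    accepted: bool


def acceptance_rate(events):
    """Return the fraction of shown suggestions that were accepted."""
    if not events:
        return 0.0  # No suggestions shown, so report 0 rather than divide by zero.
    return sum(e.accepted for e in events) / len(events)


# Made-up sample data: two of four suggestions accepted.
events = [
    SuggestionEvent("a", True),
    SuggestionEvent("b", False),
    SuggestionEvent("c", False),
    SuggestionEvent("d", True),
]
print(acceptance_rate(events))  # 0.5
```

Tracking a single number like this over time is one simple way to tell whether a change to prompting or context gathering helped or hurt.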

Part 2: Product Design & User Experience

- On Thinking in Blocks: "Programmers don't think in characters or lines; they think in functional blocks. Ghost text was designed to autocomplete entire blocks to match human mental models." — Source: No Priors Podcast

- On Fault-Tolerant UX: "AI products succeed when the UX is fault-tolerant; users should be able to easily ignore or correct a bad suggestion without friction." — Source: Building with AI

- On Reducing Friction: "The goal of any great tool is to minimize the effort between a user's intent and the final execution of that intent." — Source: CryptoSlate Interview

- On Iterative Interfaces: "AI enables a new kind of iterative interface where the software and the user co-create the final output through a series of suggestions." — Source: Building with AI

- On Real-Time Collaboration: "In products like Hackpad, the 'feel' of the real-time sync is more important than the specific features of the editor itself." — Source: Alex Graveley Blog

- On Avoiding Chat: "For development, the 'chat' interface is often a distraction; the best AI integration happens directly where the work is already being done." — Source: No Priors Podcast

- On User Trust: "Trust in an AI system is built by asking difficult questions of the model and verifying that its 'priors' are sound." — Source: 2x Founder Mistakes

- On Semantic Meaning: "Every single word added to a prompt can drastically change the meaning for an LLM; precision in language is the new precision in syntax." — Source: No Priors Podcast

- On Predictive Engines: "Modern software is shifting from being a set of tools you use to a set of predictive engines that anticipate what you want to do next." — Source: Building with AI

- On Design Simplicity: "Keep AI systems simple because the inherent complexity of natural language already introduces enough noise into the system." — Source: No Priors Podcast

Part 3: Lessons for Founders & Startups

- On Hiring for Roles: "Do not just hire 'good people' generally; hire specifically for open roles that need to be filled right now." — Source: 2x Founder Mistakes

- On Live Coding: "You must conduct live coding interviews for every engineering candidate, regardless of their seniority or resume." — Source: 2x Founder Mistakes

- On Raising Capital: "Only raise capital when you have achieved clear confidence in your product's direction and your ability to execute." — Source: 2x Founder Mistakes

- On Remote Work: "Acknowledge that many people are simply not as effective working remotely as they are in an in-person environment." — Source: 2x Founder Mistakes

- On Learning Curves: "In a fast-paced startup, now is not the time for team members to be learning a completely new area of expertise on the job." — Source: 2x Founder Mistakes

- On Decision Speed: "Practice 'fast firing' when a hire isn't working out; delayed decisions hurt the entire team more than a quick transition." — Source: 2x Founder Mistakes

- On Personal Accountability: "Shift your internal dialogue from 'We should do this' to 'I will do this' to drive real progress." — Source: 2x Founder Mistakes

- On Founder Failure Modes: "Poor health and loneliness are the most common and overlooked failure modes for startup founders." — Source: 2x Founder Mistakes

- On Hiring Responsibility: "As a founder, hiring should be at least 50% of your actual job." — Source: 2x Founder Mistakes

- On the Core Idea: "The idea that you cannot stop thinking about, no matter how hard you try, is usually the true core of your product." — Source: 2x Founder Mistakes

Part 4: The Future of Agents & AI Society

- On the Evolution of Work: "In the next few years, AI agents will write 80% of the code, and humans will shift into the role of 'directors' or reviewers." — Source: No Priors Podcast

- On AI Dangers: "I am less worried about AI itself 'killing us' and more worried about bad people using new technology to hurt others." — Source: No Priors Podcast

- On Societal Resilience: "We need to stress-test society's resilience to new technological waves before they are fully deployed." — Source: No Priors Podcast

- On Crypto & AI Agents: "Programmatic money (crypto) is the natural 'native currency' for AI agents to interact and perform work for each other." — Source: No Priors Podcast

- On Identity Security: "Cryptography is essential for securing identity to help humans differentiate between real people and AI-driven agents." — Source: No Priors Podcast

- On the Agentic Shift: "The next phase of AI isn't just generating text, but 'doing stuff for people' by navigating the web and interacting with APIs." — Source: Building with AI

- On Problem Decomposition: "To make AI agents effective, we must teach them to break down complex tasks exactly how a human does." — Source: Building with AI

- On Compounding Uncertainty: "Every step in an AI pipeline introduces uncertainty; managing that compounding error rate is the hardest part of agent design." — Source: Building with AI

- On Democratizing Expertise: "AI allows people to contribute to complex fields like programming without needing a PhD-level background." — Source: Building with AI

- On the Future of Programming: "Programming is the highest form of reasoning, and AI will eventually make this superpower accessible to everyone." — Source: No Priors Podcast
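
The "Compounding Uncertainty" point above can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that each step of an agent pipeline succeeds independently with a fixed probability; real pipelines rarely satisfy that assumption, and the 0.95 figure and the function name are hypothetical.

```python
def pipeline_success_probability(step_success_rates):
    """Probability that every step succeeds, assuming independent steps."""
    prob = 1.0
    for p in step_success_rates:
        prob *= p
    return prob


# Assumed per-step success rate of 95%, chosen only for illustration.
for n in (1, 5, 10, 20):
    overall = pipeline_success_probability([0.95] * n)
    print(f"{n:2d} steps -> {overall:.3f}")
```

Under these assumptions, a ten-step chain of 95%-reliable steps succeeds end to end only about 60% of the time, which is why the number of steps matters as much as the quality of any single step.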

Part 5: Engineering Craft & Open Source Philosophy

- On the 'Just Try It' Mentality: "The best engineering advice is often to just try random stuff and see what breaks; experimentation leads to intuition." — Source: Building with AI

- On Open Source Ethics: "Open-source practices are vital for AI ethics because they allow for continuous peer review and transparency." — Source: North Penn Now Interview

- On Community Vigilance: "The community’s ability to find and fix bugs in open-source AI often outweighs the risks associated with exposing the code." — Source: North Penn Now Interview

- On Global Values: "AI models should be built with input from a global community to ensure they reflect a diverse array of values, not just one company's." — Source: North Penn Now Interview

- On Inclusivity: "Prioritizing inclusivity in training data is the only way to systematically mitigate the risk of algorithmic bias." — Source: North Penn Now Interview

- On Shipping: "Completing a task ('Done') is more important than how much hard work you put into it." — Source: 2x Founder Mistakes

- On Asking for Help: "Asking for help is a sign of strength and a way to accelerate your learning, especially in rapidly evolving fields." — Source: 2x Founder Mistakes

- On Continuous Learning: "The 'unknown' is a great place to be because it means no one has the answer yet, and you have as good a shot as anyone." — Source: Building with AI

- On Structural Problem Solving: "Always make a structured list of things to go through when solving a problem; it works for both humans and AI." — Source: Building with AI

- On Appreciation: "Don't forget to stop and smell the roses; the process of building is just as important as the final product." — Source: 2x Founder Mistakes