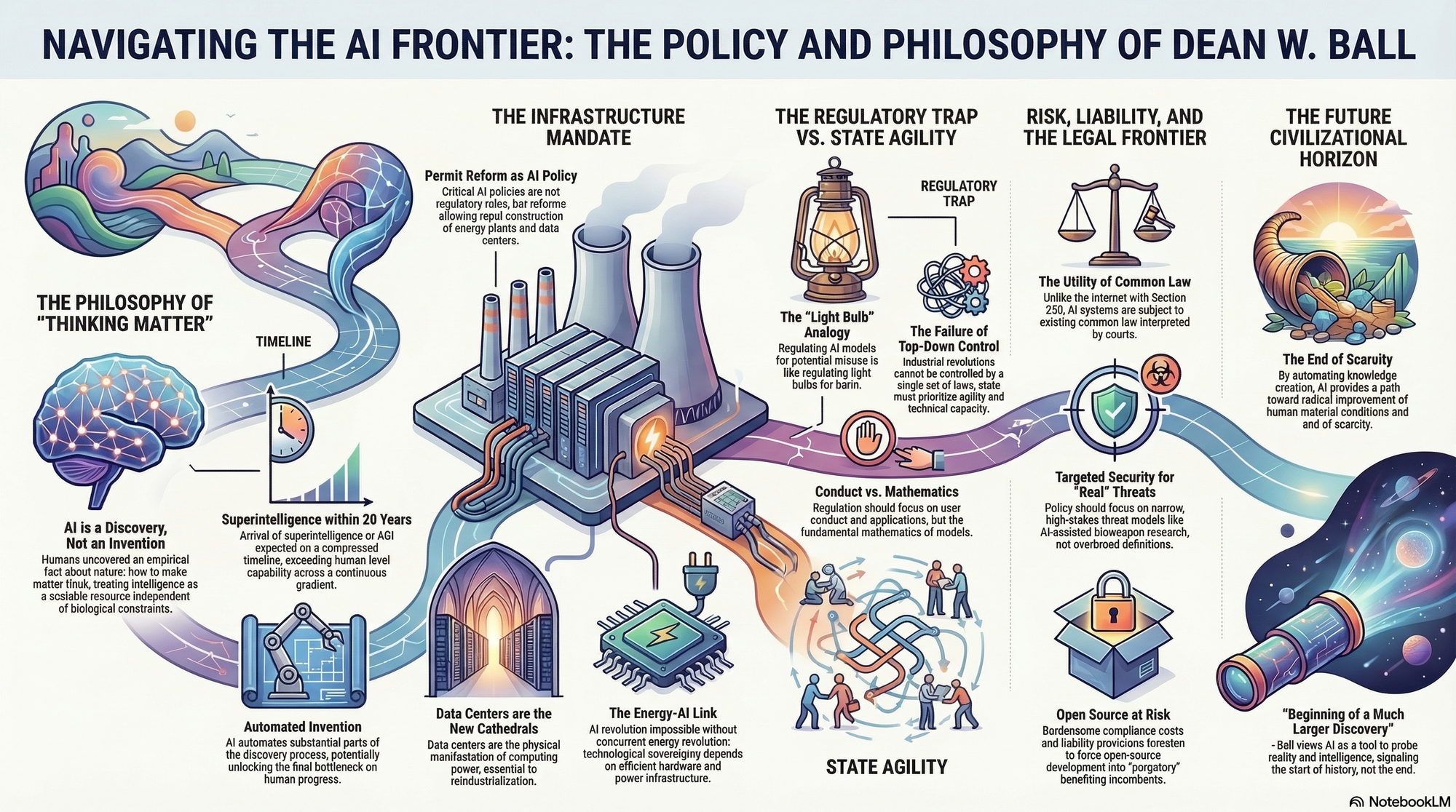

Dean W. Ball is a leading voice in technology policy and a Research Fellow at the Mercatus Center at George Mason University, where he explores the intersection of artificial intelligence, governance, and reindustrialization. Through his newsletter Hyperdimensional and extensive policy analysis, he argues that the state should prioritize technological diffusion and infrastructure over restrictive, model-level regulation.

Part 1: The Philosophy of Machine Intelligence

- On the Nature of AI: "AI should be understood primarily as a discovery rather than an invention, as an empirical fact about nature that humans have, through monumental effort, uncovered. We have discovered how to make matter think." — Source: Hyperdimensional

- On Automated Invention: "Thinking is, at least in part, the ability to solve problems, and in so doing to discover new knowledge. AI will allow us to automate substantial parts of the process of invention itself." — Source: Hyperdimensional

- On Superintelligence Timelines: "It is very likely some form of 'superintelligence' arrives in under 20 years." — Source: The Cognitive Revolution Podcast

- On Machine Cognition: "We are effectively creating a new kind of 'thinking matter' that operates on principles we are still trying to map empirically." — Source: AI Summer Podcast

- On Intelligence as a Resource: "Intelligence is the ultimate general-purpose resource, and AI is the first technology that allows us to scale it independently of biological constraints." — Source: Faster, Please!

- On AGI Disruption: "All of the disruption and tumult wrought by AI could happen on a more compressed timeline than I had expected." — Source: The American Heritage Institute

- On Post-Human Intelligence: "The first AGI will far exceed the vast majority of or even all humans in some important respects." — Source: The American Heritage Institute

- On the AI/Human Gradient: "Rather than a binary switch between 'dumb' and 'AGI,' we should view AI as a continuous gradient of increasing capability." — Source: AI Policy Perspectives

- On Defining Thinking: "If a system can solve novel problems and generate useful knowledge, it is performing the functional equivalent of human thought, regardless of its internal architecture." — Source: Hyperdimensional

Part 2: The Architecture of Modern Governance

- On Governing Industrial Revolutions: "There is no way to pass 'a law,' or a set of laws, to control an industrial revolution." — Source: The American Heritage Institute

- On State Agility: "Policymakers should prioritize building state agility and promoting engineering-first solutions over static regulatory frameworks." — Source: Mercatus Center

- On Top-Down Control: "AI and its effects are too vast and diffuse to be governed from the top down; we must rely on distributed adaptation." — Source: The American Heritage Institute

- On Diffusion-Centered Policy: "Policy should focus on AI diffusion—ensuring the technology reaches every corner of the economy—rather than just concentrating on the few labs at the frontier." — Source: Mercatus Center

- On Government as a Laggard: "Government probably will be a laggard in adoption... not because of democratic impulse, but because it's just really hard to adopt new technology in government." — Source: Effective Altruism Forum

- On Regulating Conduct: "We should regulate the conduct of users and the application of technology, not the fundamental mathematics of the models themselves." — Source: Mercatus Center

- On Bureaucratic Fiat: "Unpredictable rule by bureaucratic fiat is something that this country, and especially California, has had enough of." — Source: Hyperdimensional

- On the Capacity for Assessment: "It's questionable whether a state agency really has the capacity to do the kind of technical assessments required for frontier AI models." — Source: Interconnects AI

- On Global Competition: "The primary goal of AI policy should be to ensure the United States remains the global leader in development and deployment." — Source: Lawfare

- On Agentic Commerce: "Agentic commerce is 'right around the corner' but there is startlingly little discussion about it from a regulatory perspective." — Source: The Cognitive Revolution Podcast

Part 3: The Pitfalls of Precautionary Regulation

- On Path Dependency: "Regulation invites path dependency; wrong regulations, deployed too early, could freeze society into a brittle, suboptimal political and economic order." — Source: 80,000 Hours

- On SB 1047’s Legacy: "California’s SB 1047 would create a sweeping regulatory regime... and effectively outlaw all new open source AI models." — Source: Hyperdimensional

- On the 'Light Bulb' Analogy: "Regulating AI models based on how consumers might use them is like attempting to regulate light bulbs or electricity because of their potential for misuse." — Source: Mercatus Center

- On the Precautionary Principle: "Applying the precautionary principle to AI development risks strangling innovation before its benefits can even be realized." — Source: Faster, Please!

- On 'High-Risk' Definitions: "The definition of 'high-risk' AI in some legislation is comically overbroad, as almost any AI model could be used for a high-risk purpose." — Source: The American Heritage Institute

- On Open Source Purgatory: "At the very least, SB 1047 is a kind of purgatory for open models." — Source: Hyperdimensional

- On Compliance Costs: "Burdensome requirements for developers and businesses will primarily serve to entrench incumbents who can afford the compliance costs." — Source: The American Heritage Institute

- On the FDA Analogy: "These analogies of the FDA to AI are not really very good; you cannot test a general-purpose technology like a drug." — Source: Doom Debates

- On Safety Bureaucracy: "A large bureaucratic apparatus has begun to emerge around the nebulous and all-encompassing conception of AI safety." — Source: The American Heritage Institute

- On Shakespearean Risks: "My big concern is that we'll lock ourselves into some suboptimal dynamic and actually, in a Shakespearean fashion, bring about the world that we do not want." — Source: Effective Altruism Forum

Part 4: Reindustrialization and Infrastructure

- On Permitting Reform: "Reform of building permits should be a most important AI policy priority to ensure the construction of energy generation and data centers." — Source: The American Heritage Institute

- On the Power of Data Centers: "Data centers are the new cathedrals of the digital age; they are the physical manifestation of our computing power." — Source: City Journal

- On American Manufacturing: "AI’s greatest impact may not be in digital services, but in the reindustrialization of American manufacturing." — Source: American Compass Podcast

- On Energy Requirements: "We cannot have an AI revolution without an energy revolution; the two are fundamentally linked." — Source: Hyperdimensional

- On Infrastructure as Policy: "The most effective 'AI policy' isn't a new regulator, but a streamlined process for building power plants and transmission lines." — Source: Mercatus Center

- On the Physicality of Compute: "We must stop treating AI as an abstract software problem and start treating it as a physical infrastructure challenge." — Source: Lawfare

- On Supply Chain Resilience: "Promoting domestic semiconductor manufacturing is essential for maintaining technological sovereignty." — Source: American Compass Podcast

- On Urbanism and Tech: "The revitalization of our cities depends on their ability to host the high-density infrastructure required for the next generation of compute." — Source: City Journal

- On Global Hardware Dominance: "The country that builds the most efficient hardware and power infrastructure will dictate the terms of the AI era." — Source: Hyperdimensional

Part 5: AI as a Civilizational Discovery

- On Historical Precedents: "AI is a general-purpose technology comparable to the steam engine or electricity, requiring society-wide adaptation over decades." — Source: Mercatus Center

- On Techno-Optimism: "Technological progress is empirically the only way we have found to durably improve our material well-being." — Source: Hyperdimensional

- On Human Flourishing: "There are many worlds in which humans can thrive amid things that are better than them at various kinds of intellectual tasks." — Source: The Cognitive Revolution Podcast

- On the Meaning of Intelligence: "We are learning that 'intelligence' is not a mystical human quality, but a computational property of the universe." — Source: Hyperdimensional

- On Moral Choices: "The definition of 'beneficial' AI will be determined collectively over time, not by a single state agency's decree." — Source: Hyperdimensional

- On Civilizational Momentum: "Societies that embrace the uncertainty of discovery thrive; those that try to legislate it away stagnate." — Source: City Journal

- On the Future Economy: "The world is going to be totally different in 10 years, and our current economic categories will likely feel obsolete." — Source: The Cognitive Revolution Podcast

- On Knowledge Automation: "When we automate the creation of knowledge, we unlock the final bottleneck on human progress." — Source: Faster, Please!

- On the Discovery of Mind: "AI allows us to probe the nature of intelligence in the same way the telescope allowed us to probe the nature of the stars." — Source: Hyperdimensional

Part 6: The Future of State Capacity

- On Institutional Adaptation: "America and its institutions can successfully adapt to AI by taking smart strategic steps now, without needing 'pie in the sky' thinking." — Source: Lawfare

- On Regulatory Gradients: "A gradient approach to regulation is better than static rules that become obsolete the moment a new model is released." — Source: AI Policy Perspectives

- On State vs. Federal Roles: "Assessment of frontier models feels much more like a federal responsibility than something a state agency can handle." — Source: Interconnects AI

- On Catastrophic Risk: "Government is best suited to dealing with catastrophic risks, but it must be careful not to label every minor inconvenience as a catastrophe." — Source: Effective Altruism Forum

- On AI-Enabled Productivity: "The Trump AI Action Plan was not written by AI, but it's an example of an AI-enabled productivity boost—we got it done in about 3 months." — Source: The Cognitive Revolution Podcast

- On Transparency from Labs: "We need transparency from innovative AI labs to ensure a full and accurate understanding of system capabilities." — Source: The American Heritage Institute

- On Bureaucratic Complexity: "Overly broad legislation like SB 1047 generates needless complexity without significantly increasing safety." — Source: The American Heritage Institute

- On the Counter-Elite: "A rising counter-elite in tech is challenging the established political order, driven by a desire for growth and efficiency." — Source: City Journal

- On the Limits of Expertise: "Even having worked at the White House, I don't know tremendously more about what goes on inside the Frontier Labs than the public does." — Source: The Cognitive Revolution Podcast

Part 7: Risk, Liability, and the Legal Frontier

- On Common Law Utility: "Existing common law, interpreted and applied by courts, already applies to AI systems in a way that has not been true of internet services." — Source: Hyperdimensional

- On Section 230: "The lack of a liability shield for AI—unlike the Section 230 shield for the internet—is a profound difference that changes the legal landscape." — Source: Hyperdimensional

- On the NIST RMF Critique: "The NIST AI Risk Management Framework is comically overbroad and could create a legal nightmare if treated as law." — Source: The American Heritage Institute

- On Bioweapon Threats: "AI being used for bioweapon research is a real threat model that requires targeted, narrow security interventions." — Source: The Cognitive Revolution Podcast

- On Market Cap Imbalances: "We should worry about dangerous power imbalances should AI companies reach $50 trillion market caps." — Source: The Cognitive Revolution Podcast

- On Liability for Open Source: "Liability provisions that make open-source development impossible at the frontier are a direct attack on innovation." — Source: Hyperdimensional

- On Critical Infrastructure Deception: "The term 'critical infrastructure' is often used deceptively in AI bills to expand regulatory reach into benign software." — Source: The Cognitive Revolution Podcast

- On Accreditation Challenges: "Determining who is an 'accredited auditor' for AI models is a massive technical and political hurdle." — Source: Interconnects AI

- On Model Weights as Speech: "There is a strong argument that AI model weights should be treated as a form of protected speech or expression." — Source: Hyperdimensional

- On Future Litigation: "Treating AI risk frameworks as law will allow broad, predatory lawsuits that could bankrupt smaller developers." — Source: The American Heritage Institute

Part 8: Human Flourishing in the Age of Superintelligence

- On Thriving with AI: "We must focus on how humans can thrive in a world where we are no longer the most 'intelligent' entities on the planet." — Source: Doom Debates

- On Material Well-Being: "The ultimate goal of AI must be the radical improvement of human material conditions across the globe." — Source: Hyperdimensional

- On Enterprise as Conquerors: "The most successful 'conquerors' of the modern era are business enterprises, not countries." — Source: 80,000 Hours

- On Strategic Adaptation: "Successful adaptation requires smart strategic steps now, not just reacting to fear." — Source: Lawfare

- On the End of Scarcity: "If AI can automate invention, we may finally see the end of scarcity for fundamental resources." — Source: Faster, Please!

- On the Value of Human Agency: "As AI takes over cognitive labor, the value of human agency and decision-making will only increase." — Source: Hyperdimensional

- On Economic Incentives: "Near-term political and economic incentives will influence AI governance far more than hypothetical long-term risks." — Source: Effective Altruism Forum

- On Redesigning Governance: "AGI will necessitate a redesign of governance structures rather than merely adapting existing ones." — Source: Effective Altruism Forum

- On the Ultimate Lesson: "The lesson of AI is that we are not at the end of history, but at the beginning of a much larger discovery about the nature of reality." — Source: Hyperdimensional